There is no foolproof way to predict an earthquake. Recent smartphone apps can deliver warnings to an area, but accurate prediction remains out of reach.

NASA-led researchers are employing several promising approaches, such as examining electric clues and stress changes in rocks. Here are some of their findings:

The Rundle-Tiampo Scorecard

Seismologists have long struggled to predict large earthquakes with any degree of accuracy, and few studies have produced reliable predictive models. So far, the most successful approach has been developed by John Rundle of UC Davis’ Physics Department and colleagues. They created “scorecards” that forecast earthquake locations and times with accuracy that has improved over time. Their most recent scorecard identifies areas likely to experience large earthquakes within a decade.

The scorecard uses Pattern Informatics (PI) to detect regions of anomalous seismic activity. PI analyzes changes in small-scale seismicity, such as earthquakes with magnitudes below M5, to detect patterns indicating when faults are active.

Furthermore, anomalous seismicity can be identified by comparing current earthquake activity rates with background earthquake activity rates for that region, then identifying localized areas with either increased or decreased rates of activity.
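The rate comparison described above can be sketched in a few lines of Python. This is only an illustration of the general idea, not the published PI algorithm: the grid-cell labels, the Poisson-style z-score, the threshold of 2.0 and the function name `rate_anomalies` are all assumptions made for the example.

```python
from math import sqrt

def rate_anomalies(background_counts, background_years,
                   recent_counts, recent_years, threshold=2.0):
    """Flag cells whose recent small-event count departs from the background rate.

    Hypothetical helper: inputs are dicts mapping a cell label to an event count.
    """
    anomalies = {}
    for cell, bg_count in background_counts.items():
        bg_rate = bg_count / background_years      # long-term events per year
        expected = bg_rate * recent_years          # expected count in the recent window
        if expected == 0:
            continue                               # no baseline to compare against
        observed = recent_counts.get(cell, 0)
        # Assumed statistic: deviation scaled by sqrt(expected), as for Poisson counts
        z = (observed - expected) / sqrt(expected)
        if abs(z) >= threshold:
            label = "increased" if z > 0 else "decreased"
            anomalies[cell] = (label, round(z, 2))
    return anomalies

# Made-up counts: 20 years of background activity, 2 years of recent activity
background = {"A": 200, "B": 100, "C": 50}
recent = {"A": 35, "B": 9, "C": 0}
print(rate_anomalies(background, 20, recent, 2))
```

With these made-up numbers, cell A is flagged for increased activity, cell C for decreased activity (quiescence), and cell B stays within its normal range.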

Traditional methods of seismic data analysis rely solely on recording the amplitudes of large earthquakes. The newer methods also take into account the spectral energy of smaller events and characterize aftershocks by both frequency and timing, which lets seismologists measure the duration of an earthquake cycle. In this view, more aftershocks mean a longer earthquake cycle and therefore a higher chance that a larger quake will happen sooner rather than later.

When PI detects a pattern of aftershocks, the time between successive events is compared with the average interval between large aftershocks in that region. This determines whether a new cycle has started or an existing cycle continues, and a continuing cycle implies an increased chance of future large earthquakes in that location.

This methodology has been thoroughly evaluated, with results published in Reports on Progress in Physics.

Forecasts submitted by different participants are compared using likelihood calculations for M>5 earthquakes occurring over a given test period. A perfect forecast would assign a value of 1 wherever an earthquake did take place and 0 otherwise, so the method provides an objective way to evaluate the quality of submissions.
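To make the likelihood comparison concrete, here is a minimal sketch that scores gridded forecasts with a Bernoulli log-likelihood. It assumes each forecast gives, per cell, the probability of at least one M>5 event in the test period. The actual test statistic used for the scorecard may differ, and the function name, cell labels and clamping constant `eps` are hypothetical.

```python
from math import log

def log_likelihood(forecast, observed_cells, eps=1e-9):
    """Sum of per-cell Bernoulli log-likelihoods (higher is better, 0 is the maximum).

    forecast: dict mapping cell label -> forecast probability of an M>5 event
    observed_cells: set of cell labels where such an event actually occurred
    """
    total = 0.0
    for cell, p in forecast.items():
        p = min(max(p, eps), 1.0 - eps)   # clamp so log(0) never occurs
        if cell in observed_cells:
            total += log(p)               # reward probability placed on real events
        else:
            total += log(1.0 - p)         # reward low probability where nothing happened
    return total

observed = {"A"}                                  # made-up outcome: one event, in cell A
sharp = {"A": 0.9, "B": 0.1, "C": 0.1}            # confident and mostly right
vague = {"A": 0.5, "B": 0.5, "C": 0.5}            # uninformative
perfect = {"A": 1.0, "B": 0.0, "C": 0.0}          # 1 where the event occurred, 0 elsewhere

for name, f in [("sharp", sharp), ("vague", vague), ("perfect", perfect)]:
    print(name, log_likelihood(f, observed))
```

The perfect forecast scores essentially zero, the highest possible value, and the uninformative forecast scores lowest, which is the ordering an objective test should produce.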

Advanced Rapid Imaging and Analysis (ARIA)

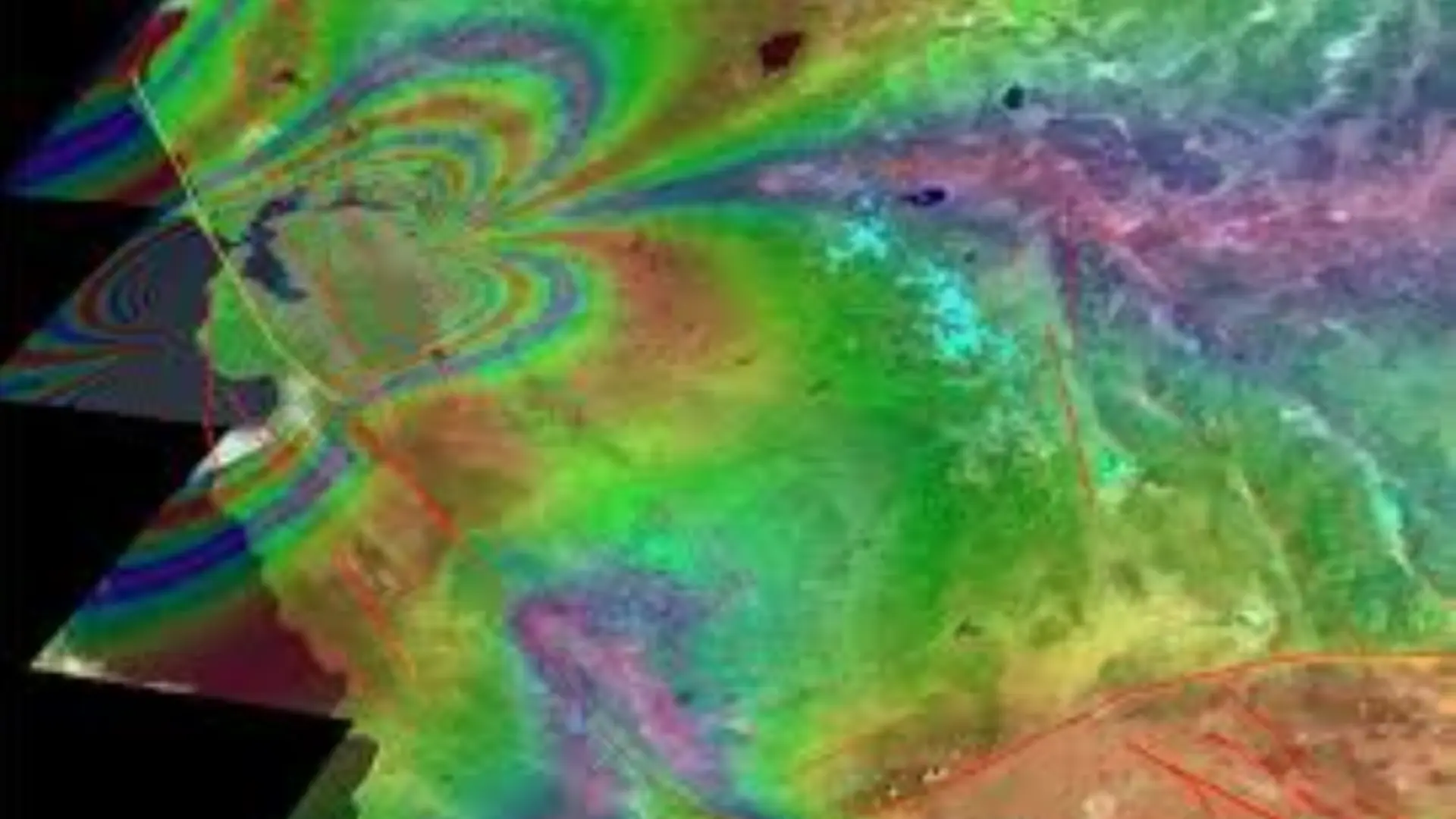

Geodetic imaging technologies such as interferometric synthetic aperture radar (InSAR) have revolutionized earthquake and volcano sciences, offering unprecedented ways to understand surface deformation, magma movement and disaster response in high spatial resolution.

While such observation systems hold promise for disaster response, turning their data into hazard maps that could give decision makers timely information after an earthquake requires complex, labor-intensive analysis. This manual process seriously limits how quickly reliable earthquake and volcanic hazard data products can be produced for immediate emergency response.

ARIA is a JPL project that seeks to automate access and processing of satellite and GPS observations in order to produce science products for earthquake and volcano hazard monitoring, emergency response planning and risk assessment. This system employs image pixel tracking with GNSS measurements in order to map seismic activity and surface changes as well as track volcanic eruptions in real time.

Moderate to large earthquakes often produce a series of ground failures and impacts that result in landslides, liquefaction and building damage that can exacerbate casualties and disrupt lifeline services.

Rapid identification and estimation of hazards and impacts allows response efforts to be focused where they’re most needed, rather than spending resources where they are not.

Existing global and regional rapid seismic hazard and impact estimation efforts use satellite observations and advanced geophysical inversion methods to estimate coseismic deformation fields. These techniques can detect structural change such as ground failures or building damage, though their accuracy may suffer when observations are sparse or unavailable.

ARIA seeks to address these limitations by combining satellite and GNSS observations, including image pixel tracking and differential global positioning system (DGPS) observations, to detect ground changes and attribute them to causes such as ground failures or building damage.

Its maps can be used to improve situational awareness in the immediate aftermath of an earthquake as well as to assess hazard risk in communities needing support.

Tsunami Detection System

While earthquake prediction is impossible, scientists have made remarkable strides in tsunami detection. Thanks to these advances, emergency response managers can now issue tsunami warnings within minutes of an event, giving coastal residents time to take life-saving action before the waves arrive.

NASA-led projects have created early warning technology to detect tsunamis using satellite sensors, radar, and seismic waves. This system can potentially predict both size and direction of an imminent tsunami as it forms, providing officials with more time to prepare.

This technology is now being used by NOAA’s Pacific Tsunami Warning Center and its National Tsunami Warning Center. It will also be shared with tsunami warning centers in Chile and New Zealand so that they can develop similar systems.

Scientists are working to improve the speed and accuracy of warnings. A 2017 study from Johnson’s lab demonstrated how machine learning could produce accurate warnings by listening to vibrations caused by faults. The algorithm even picked out signals that human researchers had previously dismissed as low-amplitude noise.

This new method has the potential to be significantly more efficient than conventional physics-based models currently in use. A typical physics-based model takes 30-45 minutes to run, and its accuracy relies on source estimates that can introduce significant uncertainty.

As part of efforts to improve tsunami inundation predictions, several nations have deployed deep-ocean tsunami detectors capable of recording velocity and height data about incoming waves in real time, which can then be fed into forecast models to improve accuracy of predictions.

Even with these advances, sea level stations remain at the core of any tsunami monitoring system.

The Global Sea Level Observing Network (S-net) has around one station per 50 kilometers of coastline, but some regions still lack dense coverage. Noise-filtering technology is now available that can make older sensors more sensitive to tsunamis, potentially shortening warning times by 10 minutes or more.

QuakeSim

Seismology’s holy grail is predicting earthquakes on time scales that would be useful for public safety and damage mitigation.

Scientists have pursued various avenues toward this goal, including studying foreshocks, magnetic field changes, unusual animal behavior, observed periodicities and stress transfer. Unfortunately, none of these methods has yielded reliable predictions.

Scientists can come closest to accurate earthquake prediction by forecasting the probability that an earthquake will strike at a specific location on a fault in a given year, an approach known as probabilistic assessment of general seismic hazard. Such forecasts play an essential role because they inform the building codes and insurance rates that reduce earthquake risk.
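As a rough illustration of what such a probability looks like, the sketch below uses a simple time-independent Poisson model, in which a fault segment with a given mean recurrence interval has the same chance of rupturing in any year. This model is an assumption chosen for simplicity and is not necessarily what NASA or hazard agencies use. The function name and the 150-year recurrence interval are made up for the example.

```python
from math import exp

def rupture_probability(mean_recurrence_years, window_years=1.0):
    """Probability of at least one event in the window under a Poisson model.

    Assumes events occur independently at a constant average rate of
    1 / mean_recurrence_years per year.
    """
    rate = 1.0 / mean_recurrence_years
    return 1.0 - exp(-rate * window_years)

# Hypothetical fault segment that ruptures on average once every 150 years
print(round(rupture_probability(150, 30), 3))   # 30-year window -> 0.181
print(rupture_probability(150))                 # single-year probability
```

Under this assumption, a fault that ruptures about once every 150 years has roughly an 18 percent chance of doing so in any given 30-year period, the kind of figure that building codes and insurers can work with.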

NASA’s solid Earth science research seeks to increase the accuracy of probabilistic assessments with sophisticated computer models. These models combine seismic, GPS and high-precision geodetic radar measurements with 3-D simulations of fault behavior to identify regions with increased earthquake probabilities, known as hot spots. The results should in turn improve fault modeling techniques and forecasts of future earthquake locations.

Understanding how the crust deforms below the surface, and how that changes the shaking experienced by people on the ground, can also improve earthquake forecasting. It provides crucial data that lets scientists and engineers create and test new models and assess the performance of current prediction tools.

QuakeSim, developed at NASA’s Jet Propulsion Laboratory in Pasadena, California, is one tool scientists use to understand crustal deformation. It offers flexible data discovery and visualization tools as well as pattern recognition features that allow efficient mining of complex datasets for subtle features.

QuakeSim’s framework features three simulation tools – GeoFEST, PARK and Virtual California – which simulate different aspects of an earthquake cycle. Additionally, supporting components like federated databases, visualization tools and pattern informatics help both scientists and end user applications benefit from QuakeSim.

Frequently Asked Questions

While there is no sure method of predicting earthquakes, NASA-led scientists are experimenting with various methods to improve our knowledge and forecasting capabilities. These include the Rundle-Tiampo Scorecard and Pattern Informatics, which identify regions susceptible to large earthquakes over the next 10 years. Accurate prediction, however, remains difficult.

The Rundle-Tiampo Scorecard is a method developed by John Rundle and colleagues that forecasts earthquake times and locations with accuracy that improves over time. It studies patterns of seismic activity, particularly smaller earthquakes, to identify active faults and evaluate the likelihood of large earthquakes in specific areas.

ARIA, which stands for Advanced Rapid Imaging and Analysis, is a research project of NASA’s Jet Propulsion Laboratory. ARIA automates the processing of satellite and GPS observations to create science products for earthquake and volcano hazard monitoring, emergency response planning and risk assessment. By monitoring ground movement and seismic activity in real time, ARIA improves our understanding of the effects of earthquakes and supports rapid emergency response.

Although earthquakes are difficult to predict, NASA-led initiatives have made substantial advances in tsunami detection. Using satellite sensors, radar and analysis of seismic waves, scientists can issue timely tsunami warnings and help coastal communities prepare for and reduce the danger.

Machine learning techniques are being developed to analyze seismic data more efficiently and accurately. By analyzing vibrations caused by faults, algorithms can detect signals that human scientists previously ignored. This technology could increase the speed and precision of earthquake warnings compared with conventional physics-based models.

QuakeSim, developed at NASA’s Jet Propulsion Laboratory, is a tool scientists use to study crustal deformation and earthquake cycles. It includes simulation tools such as GeoFEST and Virtual California, along with pattern recognition features, for analyzing large datasets and improving our understanding of earthquake behavior.

Probabilistic assessment is the process of forecasting the likelihood of earthquakes occurring in particular places over specific time periods. NASA’s research blends seismic, GPS and radar measurements with 3-D simulations to pinpoint regions with greater earthquake probability, also known as hot spots. These assessments inform building codes and insurance rates and help reduce earthquake risk for the communities they serve.